Lab 05 - Plotting

Objectives

- Offer an introduction to Gnuplot

- Get you familiarised with basic plots in Gnuplot

Contents

Gnuplot Introduction

Gnuplot is a free, command-driven, interactive, function and data plotting program. It can be downloaded at https://sourceforge.net/projects/gnuplot/. The official Gnuplot documentation can be found at http://gnuplot.sourceforge.net/documentation.html.

It was originally created to allow scientists and students to visualize mathematical functions and data interactively, but has grown to support many non-interactive uses such as web scripting. It is also used as a plotting engine by third-party applications such as Octave.

The command language of Gnuplot is case sensitive, i.e. commands and function names written in lowercase are not the same as those written in capitals. All command names may be abbreviated as long as the abbreviation is not ambiguous. Any number of commands may appear on a line, separated by semicolons (;). Strings may be set off by either single or double quotes, although there are some subtle differences. See syntax (p. 44) and quotes (p. 44) for more details in the Gnuplot documentation (http://gnuplot.sourceforge.net/docs_5.0/gnuplot.pdf).

Commands may extend over several input lines by ending each line but the last with a backslash (\). The backslash must be the last character on each line. The effect is as if the backslash and newline were not there. That is, no white space is implied, nor is a comment terminated.

For built-in help on any topic, type help followed by the name of the topic or help ? to get a menu of available topics.

Tutorial

Tasks

01. [10p] Valgrind

Dynamic analysis tools can observe a running process and report memory-related issues that static analysis would miss entirely. In this exercise you will use Valgrind to detect memory leaks in a small C program – and get a first taste of the dynamic instrumentation concept that will be developed further in Task 04 with Intel Pin.

[5p] Task A - Writing a leaky program

Read the contents of leak.c and compile it:

$ gcc -g -o leak leak.c

The -g flag includes debug symbols so Valgrind can report exact file names and line numbers.

Now run it normally and observe that nothing seems wrong from the outside:

$ ./leak
$ echo "exit code: $?"

[5p] Task B - Detecting leaks with Valgrind

Run the same binary under Valgrind's memory error detector:

$ valgrind --leak-check=full --show-leak-kinds=all ./leak

Examine the output and answer the following questions:

- How many bytes are reported as definitely lost? Does this match what you would expect from reading the source?

- What is the difference between definitely lost and indirectly lost in Valgrind's terminology?

- At what line number does Valgrind point as the origin of the leak? Why is that line significant rather than the line where the pointer goes out of scope?

- Re-compile without the -g flag and run Valgrind again. What information is now missing from the report, and why?

On certain distributions such as CachyOS, you may get the following error:

valgrind: Fatal error at startup: a function redirection
valgrind: which is mandatory for this platform-tool combination
valgrind: cannot be set up. Details of the redirection are:

Valgrind needs the DWARF debug info for libc in order to function properly. If the ELF file itself doesn't have it, Valgrind will try to download it via debuginfod-find, using the Build ID stored in the .note.gnu.build-id section. If the debuginfod server doesn't have it either, your only options for getting it to work are:

- recompiling glibc with debug symbols (out of the question)

- starting a docker container with Ubuntu, Debian, Arch Linux, etc.

02. [20p] Swap space

[10p] Task A - Swap File

First, let us check what swap devices we have enabled. Check the NAME and SIZE columns of the following command:

$ swapon --show

No output means that there are no swap devices available.

If you ever installed a Linux distro, you may remember creating a separate swap partition. This, however, is only one method of creating swap space. The other is by adding a swap file. Run the following commands:

$ sudo swapoff -a
$ sudo dd if=/dev/zero of=/swapfile bs=1024 count=$((4 * 1024 * 1024))
$ sudo chmod 600 /swapfile
$ sudo mkswap /swapfile
$ sudo swapon /swapfile
$ swapon --show

Just to clarify what we did:

- disabled all swap devices

- created a 4 GiB zero-initialized file

- restricted the file's permissions so that only root can read or write it

- created a swap area from the file using mkswap (works on devices too)

- activated the swap area
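As a quick sanity check on the dd arguments used above: dd writes count blocks of bs bytes each. The short calculation below is plain arithmetic (nothing specific to this lab) and confirms the size of the resulting file.

```python
# dd writes `count` blocks of `bs` bytes each.
bs = 1024                  # bytes per block (bs=1024)
count = 4 * 1024 * 1024    # number of blocks (count=$((4 * 1024 * 1024)))

total_bytes = bs * count
total_gib = total_bytes / 2**30

print(f"{total_bytes} bytes = {total_gib:.0f} GiB")
```

This should print 4294967296 bytes = 4 GiB.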

The new swap area is temporary and will not survive a reboot. To make it permanent, we need to register it in /etc/fstab by adding a line such as this:

/swapfile swap swap defaults 0 0

[10p] Task B - Does it work?

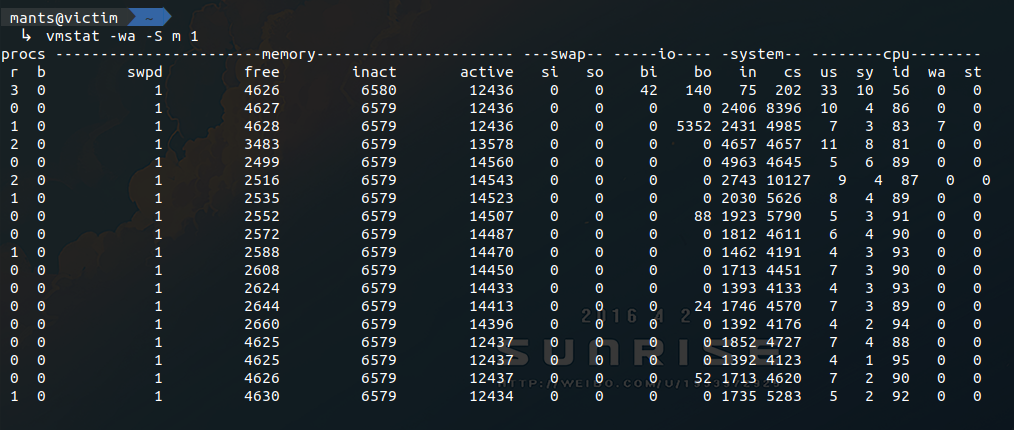

In one terminal run vmstat and look at the swpd and free columns.

$ vmstat -w 1
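If you would rather process the vmstat output than read it by eye, the sketch below shows one possible way to turn it into dictionaries keyed by column name. It assumes the usual layout (a banner line, a header line containing swpd and free, then numeric rows); parse_vmstat is a hypothetical helper, not part of the lab skeleton.

```python
def parse_vmstat(lines):
    """Yield one dict per vmstat data row, keyed by column name."""
    columns = None
    for line in lines:
        fields = line.split()
        if not fields:
            continue
        # The header row names the columns (swpd, free, buff, cache, ...).
        if "swpd" in fields and "free" in fields:
            columns = fields
            continue
        # Skip the banner row and anything that does not match the header.
        if columns is None or len(fields) != len(columns):
            continue
        try:
            values = [int(f) for f in fields]
        except ValueError:
            continue
        yield dict(zip(columns, values))


sample = """\
--procs-- -----------------------memory---------------------- ---swap--
   r    b         swpd         free         buff        cache   si   so
   1    0          512      5381848       123456      2345678    0    0
"""
rows = list(parse_vmstat(sample.splitlines()))
print(rows[0]["swpd"], rows[0]["free"])
```

To use it on live data, pipe vmstat -w 1 into a script that passes sys.stdin to parse_vmstat().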

In another terminal, open a python shell and allocate a bit more memory than the available RAM. Identify the moment when the newly created swap space is being used.
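One possible way to do the allocation is sketched below. The function name and the chunk size are arbitrary choices; the only important detail is that the memory is actually written to, so the kernel has to back it with real pages.

```python
def allocate(total_mib, chunk_mib=64):
    """Allocate roughly total_mib MiB in chunks and keep them alive."""
    chunks = []
    remaining = total_mib
    while remaining > 0:
        size = min(chunk_mib, remaining)
        # Repeating a non-zero byte writes to every page of the chunk.
        chunks.append(b"\x01" * (size * 1024 * 1024))
        remaining -= size
    return chunks


# Small demonstration; in the python shell you would pass a value
# slightly above your available RAM (in MiB) and keep the result.
held = allocate(3, chunk_mib=2)
print(len(held), sum(len(c) for c in held))
```

Keep a reference to the returned list for as long as you want the memory to stay allocated; deleting it (or closing the shell) releases it.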

One thing you might notice is that the value in vmstat's free column is lower than before. This does not mean that you have less available RAM after creating the swap file. Remember using the dd command to create a 4GB file? A big chunk of RAM was used to buffer the data that was written to disk. If free drops to unacceptable levels, the kernel will make sure to reclaim some of this buffer/cache memory. To get a clear view of how much available memory you actually have, try running the following command:

$ free -h
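The same figures are exposed by the kernel in /proc/meminfo, where MemAvailable is the estimate of memory that can be used without swapping. The sketch below parses that format; parse_meminfo is a hypothetical helper and the sample text is made up for illustration.

```python
def parse_meminfo(text):
    """Return a dict mapping /proc/meminfo keys to their integer values."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue
        parts = rest.split()
        if parts and parts[0].isdigit():
            # Most entries are reported in kB; a few are plain counts.
            info[key.strip()] = int(parts[0])
    return info


sample = """\
MemTotal:       16316412 kB
MemFree:          812344 kB
MemAvailable:    9876543 kB
HugePages_Total:       0
"""
info = parse_meminfo(sample)
print(info["MemFree"], info["MemAvailable"])
```

On a real system you would call parse_meminfo(open("/proc/meminfo").read()) and compare MemFree with MemAvailable.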

Observe that once you close the python shell and the memory is freed, swpd still displays a non-zero value. Why? There simply isn't a reason to clear the data from the swap area. If you really want to clean up the used swap space, try the following:

$ vmstat
$ sudo swapoff -a && sudo swapon -a
$ vmstat

Create two swap files. Set their priorities to 10 and 20, respectively.

Include the commands (copy+paste) or a screenshot of the terminal.

Also list two advantages and two disadvantages of using a swap file compared with a swap partition.

03. [30p] Kernel Samepage Merging

KSM is a page de-duplication strategy introduced in kernel version 2.6.32. In case you are wondering, it's not the same thing as the file page cache. KSM was originally developed in tandem with KVM in order to detect data pages with exactly the same content and make their page table entries point to the same physical address (marked Copy-On-Write). The end goal was to allow more VMs to run on the same host. Since each page must be scanned for identical content, this solution had no chance of scaling well with the available quantity of RAM. So, the developers compromised and decided to scan only the private anonymous pages that were marked as likely candidates via madvise(addr, length, MADV_MERGEABLE).
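To make the madvise() step concrete, here is a minimal sketch in Python (3.8 or newer, on Linux) that creates a private anonymous mapping, fills every page with the same byte and marks the region as mergeable. This is not the skeleton's code; alloc_mergeable is a hypothetical name, and the call is wrapped in a try block because madvise fails on kernels built without KSM.

```python
import mmap


def alloc_mergeable(num_pages, fill=0x41):
    """Map num_pages identical private anonymous pages and advise KSM."""
    length = num_pages * mmap.PAGESIZE
    buf = mmap.mmap(-1, length,
                    flags=mmap.MAP_PRIVATE | mmap.MAP_ANONYMOUS,
                    prot=mmap.PROT_READ | mmap.PROT_WRITE)
    page = bytes([fill]) * mmap.PAGESIZE
    for _ in range(num_pages):
        buf.write(page)          # identical content in every page
    advised = False
    try:
        buf.madvise(mmap.MADV_MERGEABLE)
        advised = True
    except (AttributeError, OSError):
        # Old Python, or a kernel without CONFIG_KSM.
        pass
    return buf, advised


buf, advised = alloc_mergeable(4)
print(len(buf), advised)
```

Marking the region only makes it a candidate; the pages are merged later, when ksmd gets around to scanning them.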

Download the skeleton for this task.

[10p] Task A - Check kernel support & enable ksmd

First things first, you need to verify that KSM was enabled during your kernel's compilation. For this, you need to check the Linux build configuration file. Hopefully, you should see something like this:

# on Ubuntu you can usually find it in your /boot partition
$ grep CONFIG_KSM /boot/config-$(uname -r)
CONFIG_KSM=y

# otherwise, you can find a gzip compressed copy in /proc
$ zcat /proc/config.gz | grep CONFIG_KSM
CONFIG_KSM=y

If you don't have KSM enabled, you could recompile the kernel with the CONFIG_KSM flag and try it, but you don't have to :)

Moving forward. Next thing on the list is to check that the ksmd daemon is functioning. Any configuration that we'll do will be through the sysfs files in /sys/kernel/mm/ksm. Consequently, you should switch to the root user (a command such as sudo echo 1 > file will not work, because the redirection is performed by your unprivileged shell.)

- /…/run : this is 1 if the daemon is active; write 1 to it if it's not

- /…/pages_to_scan : this is how many pages will be scanned before going to sleep; you can increase this to 1000 if you want to see faster results

- /…/sleep_millisecs : this is how many ms the daemon sleeps in between scans; since you've modified pages_to_scan, you can leave this be

- /…/max_page_sharing : this is the maximum number of virtual pages that are allowed to share a single de-duplicated physical page; in cases like this it's better to go big or go home; so set it to something like 1000000, just to be sure
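Writing to the files listed above is just a matter of storing a number in each of them. The sketch below wraps that in two hypothetical helpers; the base parameter exists only so that the example can be tried against a scratch directory instead of the real sysfs tree.

```python
import tempfile
from pathlib import Path

KSM_DIR = Path("/sys/kernel/mm/ksm")


def configure_ksm(settings, base=KSM_DIR):
    """Write each value to the file of the same name under base."""
    for name, value in settings.items():
        (Path(base) / name).write_text(f"{value}\n")


def read_ksm(name, base=KSM_DIR):
    """Read one of the numeric files under base."""
    return int((Path(base) / name).read_text().strip())


# Demonstration against a temporary directory (no root required).
scratch = tempfile.mkdtemp()
configure_ksm({"run": 1, "pages_to_scan": 1000}, base=scratch)
print(read_ksm("run", base=scratch), read_ksm("pages_to_scan", base=scratch))
```

Run as root and without the base argument, the same calls would operate on /sys/kernel/mm/ksm.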

There are a few more files in the ksm/ directory. We will still use one or two later on. But for now, configuring the previous ones should be enough. Google the rest if you're interested.

[10p] Task B - Watch the magic happen

For this step it would be better to have a few terminals open. First, let's start a vmstat. Keep your eyes on the active memory column when we run the sample program.

$ vmstat -wa -S m 1

Next would be a good time to introduce two more files from the ksm/ sysfs directory:

- /…/pages_shared : this file reports how many de-duplicated physical pages are in use at the moment

- /…/pages_sharing : this file reports how many virtual page table entries point to the aforementioned physical pages
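These two counters can be combined into a rough estimate of how well KSM is doing. The sketch below assumes, following the kernel's KSM documentation, that pages_sharing counts the additional references to shared pages, so that multiplying it by the page size approximates the memory saved. ksm_savings is a hypothetical helper, and 4096 is an assumed page size.

```python
def ksm_savings(pages_shared, pages_sharing, page_size=4096):
    """Return (approximate bytes saved, sharing ratio)."""
    saved_bytes = pages_sharing * page_size
    ratio = pages_sharing / pages_shared if pages_shared else 0.0
    return saved_bytes, ratio


# Made-up counter values, for illustration only.
saved, ratio = ksm_savings(pages_shared=10, pages_sharing=990)
print(saved, ratio)
```

A high ratio means that many virtual pages are being served by few physical ones.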

For this experiment we will also want to monitor the number of de-duplicated virtual pages, so have at it:

$ watch -n 0 cat /sys/kernel/mm/ksm/pages_sharing

Finally, look at the provided code, compile it, and launch the program. As an argument you will need to provide the number of pages that will be allocated and initialized with the same value. Note that not all pages will be de-duplicated instantly. So keep in mind your system's RAM limitations before deciding how much you can spare (1-2GB should be ok, right?)

The result should look something like Figure 1:

If you ever want to make use of this in your own experiments, remember to adjust the configuration of ksmd. Waking too often or scanning too many pages at once could end up doing more harm than good. See what works for your particular system.

Include a screenshot with the same output as the one in the spoiler above.

Edit the screenshot or note in writing at what point you started the application, where it reached max memory usage, the interval where KSM daemon was doing its job (in the 10s sleep interval) and where the process died.

[10p] Task C - Plot results

Now that you’ve observed the effects of KSM using vmstat, it’s time to visualize them. Solve the TODOs in plot.py from the skeleton to generate a real-time plot that shows free memory, used memory, and memory used as a buffer over time, based on the freemem column from the output of the vmstat command.

If you get something resembling Could not load the Qt platform plugin “xcb” in ”” even though it was found. on either WSL or certain Linux environments (e.g., having Hyprland as a Wayland compositor), check out this post.

04. [40p] Intel PIN

Broadly speaking, binary analysis is of two types:

- Static analysis - used in an offline environment to understand how a program works without actually running it.

- Dynamic analysis - applied to a running process in order to highlight interesting behavior, bugs or performance issues.

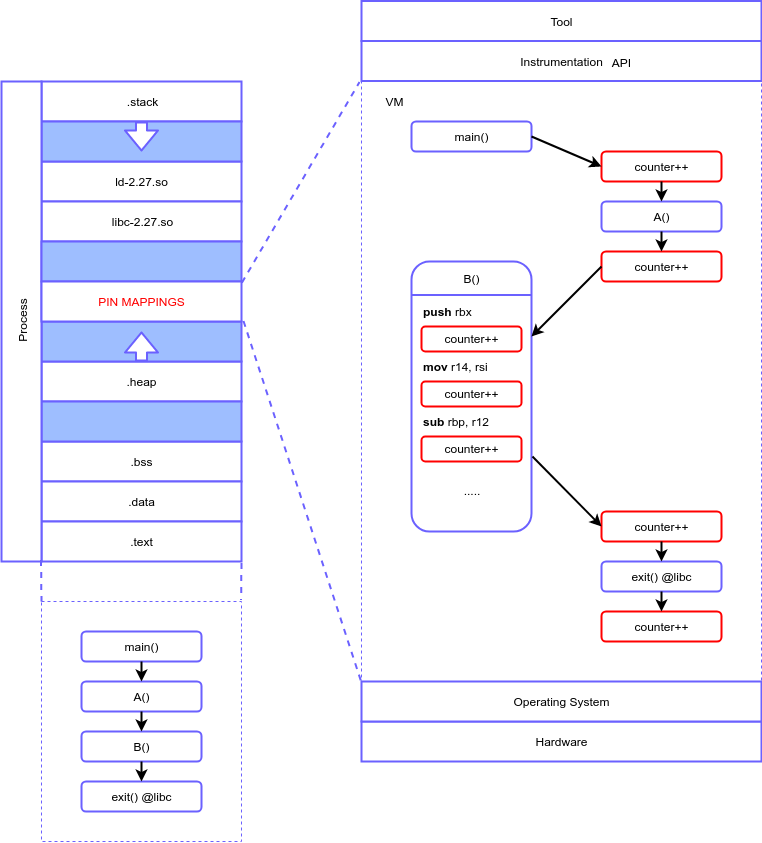

In case you are still wondering, in this exercise we are going to look at (one of) the best dynamic analysis tools available: Intel Pin. Specifically, what Pin does is called program instrumentation, meaning that it inserts user-defined code at arbitrary locations in the executable. The code is inserted at runtime, meaning that Pin can attach itself to a process, just like gdb.

Although Pin is closed source, the concepts that form its foundation are described in this paper. Since we don't have time to scan through the whole material, we will offer a bird's eye view of its architecture. Just enough to get you started with the tasks.

When a process is started via Pin, the very first instruction is intercepted and new mappings are created in the virtual space of the process. These mappings contain libraries that Pin uses, the tool that the user wrote (which is compiled as a shared object) and a small sandbox that will act as a VM. During the execution, Pin will translate the original instructions into the sandbox on an as-needed basis and, according to the rules defined in the tool, insert arbitrary code. This code can be inserted at different levels of granularity:

- instruction

- basic block

- function

- image

The immediate advantages should be clear. Only from a performance evaluation standpoint, a few applications could be:

- obtaining metrics from programs that were not designed with this in mind

- hotpatching bugs without stopping the process

- detecting the most accessed code regions to prioritize manual optimization

Although this sounds great, we should not ignore some of the glaring disadvantages:

- overhead

  - this is highly dependent on the amount of instrumentation and the instrumented code itself

  - overall, this seems to have a bit more impact on ARM than on other architectures

- volatile

  - remember that the instrumented code shares things like the virtual memory space and file descriptors with the original process

  - while something like in-memory fuzzing is possible, the risk of breaking the process is very high

- limited use cases

  - Pin works directly on a regular executable (with native machine code)

  - Pin will not work (as intended) on interpreted languages and variations of these

In case you are wondering what else you can do with Intel Pin, check out TaintInduce. The authors of this paper wrote an architecture agnostic taint analysis tool that successfully found 24 CVEs, 17 missing or wrongly emulated instructions in unicorn and 1 mistake in the Intel Developer Manual.

For reference, use the Intel Pin 4.2 User Guide (also contains examples).

[5p] Task A - Setup

In this tutorial we will build a Pin tool with the goal of instrumenting any memory reads/writes. For reads, we output the source buffer state before the operation takes place. For writes, we output the destination buffer states both before and after.

Download the skeleton for this task. First thing you will need to do is run setup.sh. This will download the Intel Pin 4.2 framework into the newly created third_party/ directory and create a stable symlink at third_party/pin.

$ bash setup.sh

Next, open src/minspect.cpp in an editor of your choice, but avoid modifying the code. In between tasks, we will apply diff patches to this file. This will allow us to gradually build our tool and observe its behavior at different stages during its development. However, altering the source in any significant manner may cause the patch to fail.

Let us apply the first patch before proceeding to the following task:

$ patch src/minspect.cpp patches/Task-A.patch

If you get a lot of compilation errors, the easiest solution we have right now is to boot up an Arch Linux container and use g++ 15.2.1 instead of 13.3. Here's how you do it and how you install the dependencies once it's up and running:

[student@host ~]$ docker run -ti archlinux:latest

# sync package database with remote server & install dependencies
[root@arch ~]$ pacman -Sy
[root@arch ~]$ pacman -S base-devel git wget neovim

After you clone the EP-labs repo, run setup.sh again and try to compile the project, you may still get one error regarding an undefined field called m_base. This is an error in their source code; just find the file and delete the m in m_base. That's why you have nvim installed ;)

[10p] Task B - Instrumentation Callbacks

Looking at main(), most Pin API calls are self-explanatory. The only one that we're interested in is the following:

INS_AddInstrumentFunction(ins_instrum, NULL);

This call instructs Pin to trap on each instruction in the binary and invoke ins_instrum(). However, this happens only once per instruction. The role of the instrumentation callback that we register is to decide if a certain instruction is of interest to us. “Of interest” can mean basically anything. We can pick and choose “interesting” instructions based on their class, registers / memory operands, functions or objects containing them, etc.

Let's say that an instruction has indeed passed our selection. Now, we can use another Pin API call to insert an analysis routine before or after said instruction. While the instrumentation routine will never be invoked again for that specific instruction, the analysis routine will execute seamlessly for each pass.
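The split between the two kinds of callbacks can be illustrated with a toy model. The sketch below is plain Python and does not use the Pin API; ToyPin, the fake program and the trace are all invented for illustration. The instrumentation callback runs once per distinct instruction and decides which analysis routines to attach, while the attached routines run every time that instruction executes.

```python
class ToyPin:
    """Toy model of an instrumenting engine; not the real Pin API."""

    def __init__(self, instrument):
        self.instrument = instrument   # instrumentation callback
        self.analysis = {}             # address -> analysis routines

    def run(self, program, trace):
        for addr in trace:
            if addr not in self.analysis:
                # First time this instruction is seen: instrument it once.
                self.analysis[addr] = self.instrument(addr, program[addr])
            for routine in self.analysis[addr]:
                routine(addr)          # runs on every execution


instrumented = []   # addresses seen by the instrumentation callback
analysed = []       # addresses seen by the analysis routine


def ins_instrum(addr, text):
    instrumented.append(addr)
    if "[" in text:   # crude stand-in for "has a memory operand"
        return [analysed.append]
    return []


program = {0x10: "mov rax, [rbx]", 0x14: "add rax, 1", 0x18: "jnz 0x10"}
trace = [0x10, 0x14, 0x18] * 4   # a loop body executed four times
ToyPin(ins_instrum).run(program, trace)
print(len(instrumented), len(analysed))
```

This should print 3 4: three instructions were instrumented once each, and the single instruction of interest was analysed on each of its four executions.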

For now, let us observe only the instrumentation callback and leave the analysis routine registration for the following task. Take a look at ins_instrum(). Then, compile the tool and run any program you want with it. Waiting for it to finish is not really necessary. Stop it after a few seconds.

$ make
$ ./third_party/pin/pin -t obj-intel64/minspect.so -- ls -l 1>/dev/null

Just to make sure everything is clear: the default rule for make will generate an obj-intel64/ directory and compile the tool as a shared object. The way to start a process with our tool's instrumentation is by calling the pin util. -t specifies the tool to be used. Everything after -- should be the exact command that would normally be used to start the target process.

Note: here, we output information to stderr from our instrumentation callback. This is not good practice. The Pin tool and the target process share pretty much everything: file descriptors, virtual memory, etc. Normally, you will want to output these things to a log file. However, let's say we can get away with it for now, under the pretext of convenience.

Remember to apply the Task-B.patch before proceeding to the next task.

[10p] Task C - Analysis Callbacks (Read)

Going forward, we got rid of some of the clutter in ins_instrum(). As you may have noticed, the most recent addition to this routine is the for loop iterating over the memory operands of the instruction. We check whether each operand is the source of a read using INS_MemoryOperandIsRead(). If this check succeeds, we insert an analysis routine before the current instruction using INS_InsertPredicatedCall(). Let's take a closer look at how this API call works:

INS_InsertPredicatedCall(
    ins, IPOINT_BEFORE, (AFUNPTR) read_analysis,
    IARG_ADDRINT, ins_addr,
    IARG_PTR, strdup(ins_disass.c_str()),
    IARG_MEMORYOP_EA, op_idx,
    IARG_MEMORYREAD_SIZE,
    IARG_END);

The first three parameters are:

- ins: reference to the INS argument passed to the instrumentation callback by default.

- IPOINT_BEFORE: instructs to insert the analysis routine before the instruction executes (see Instrumentation arguments for more details.)

- read_analysis: the function that is to be inserted as the analysis routine.

Next, we pass the arguments for read_analysis(). Each argument is represented by a type macro and the actual value. When we don't have any more parameters to send, we end by specifying IARG_END. Here are all the arguments:

- IARG_ADDRINT, ins_addr: a 64-bit integer containing the absolute address of the instruction.

- IARG_PTR, strdup(ins_disass.c_str()): all objects in the callback's local context will be lost after we return; thus, we need to duplicate the disassembled code's string and pass a pointer to the copy.

- IARG_MEMORYOP_EA, op_idx: effective address of a specific memory operand; this argument is not passed by value, but is instead recalculated each time and passed to the analysis routine seamlessly.

- IARG_MEMORYREAD_SIZE: size in bytes of the memory read; check the documentation for some important exceptions.

Take a look at what read_analysis() does. Recompile the tool and run it again (just as in task B). Finally, apply Task-C.patch and move on to the next task.

[10p] Task D - Analysis Callbacks (Write)

For the memory write analysis routine, we need to add instrumentation both before and after each instruction. The former needs to save the original buffer state while the latter displays the information in its entirety. Assuming that more than one memory location is written to, we push the initial buffer state hexdumps to a stack. Consequently, we need to add the post-write instrumentation in reverse order to ensure that the succession of elements popped from the stack is correct. Let's take a look at the pre-write instrumentation insertion:

INS_InsertPredicatedCall(
    ins, IPOINT_BEFORE, (AFUNPTR) pre_write_analysis,
    IARG_CALL_ORDER, CALL_ORDER_FIRST + op_idx + 1,
    IARG_MEMORYOP_EA, op_idx,
    IARG_MEMORYWRITE_SIZE,
    IARG_END);

We notice a new set of parameters:

- IARG_CALL_ORDER, CALL_ORDER_FIRST + op_idx + 1: specifies the call order when multiple analysis routines are registered; see CALL_ORDER enum's documentation for details.
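Why the post-write routines must run in reverse operand order is easiest to see in a small simulation. The sketch below is a toy model in Python, not the code from minspect.cpp; simulate_write and its data layout are invented for illustration. The pre-write step pushes one snapshot per operand, and the post-write step pops them, so it has to visit the operands last to first for each pop to match.

```python
def simulate_write(memory, writes):
    """memory: addr -> value; writes: one (addr, new_value) per operand."""
    stack = []
    log = []

    # Pre-write analysis, operands 0, 1, 2, ...: save the old state.
    for op_idx, (addr, _) in enumerate(writes):
        stack.append((op_idx, memory[addr]))

    # The instruction itself executes.
    for addr, new_value in writes:
        memory[addr] = new_value

    # Post-write analysis in reverse order, so that each pop returns
    # the snapshot belonging to the operand being reported.
    for op_idx in reversed(range(len(writes))):
        saved_idx, before = stack.pop()
        assert saved_idx == op_idx
        log.append((op_idx, before, memory[writes[op_idx][0]]))
    return log


memory = {0x1000: 1, 0x2000: 2}
log = simulate_write(memory, [(0x1000, 10), (0x2000, 20)])
print(log)
```

Each log entry holds the operand index followed by the value before and after the write; visiting the operands in ascending order in the last loop would trip the assertion on the first pop.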

Recompile the tool. Test to see that the write analysis routines work properly. Apply Task-D.patch and let's move on to applying the finishing touches.

[5p] Task E - Finishing Touches

This is only a minor addition. Namely, we want to add a command line option -i that can be used multiple times to specify multiple image names (e.g.: ls, libc.so.6, etc.) The tool must forego instrumentation for any instruction that is not part of these objects. As such, we declare a Pin KNOB:

static KNOB<string> knob_img(KNOB_MODE_APPEND, "pintool", "i", "", "names of objects to be instrumented for branch logging");

We should not use argp or other alternatives. Instead, let Pin use its own parser for these things. knob_img will act as an accumulator for any argument passed with the flag -i. Observe its usage in ins_instrum().
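For readers unfamiliar with accumulating options, the closest Python analogue to a KNOB in append mode is an argparse argument with action="append". The sketch below is only an analogy and has nothing to do with Pin's own parser; should_instrument is a hypothetical helper, and instrumenting everything when no -i is given is an assumption made for this example.

```python
import argparse

parser = argparse.ArgumentParser()
# Every occurrence of -i appends one more image name to the list.
parser.add_argument("-i", dest="images", action="append", default=[])
args = parser.parse_args(["-i", "ls", "-i", "libc.so.6"])


def should_instrument(image_path, wanted):
    """Decide whether instructions from image_path are of interest."""
    if not wanted:
        return True   # assumption: no filter means instrument everything
    name = image_path.rsplit("/", 1)[-1]
    return name in wanted


print(args.images)
print(should_instrument("/lib/x86_64-linux-gnu/libc.so.6", args.images))
print(should_instrument("/lib/x86_64-linux-gnu/libselinux.so.1", args.images))
```

The comparison is done on the file name only, which matches the way image names are given in the example above (ls, libc.so.6).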

Determine the shared object dependencies of your target binary of choice. Then try to recompile and rerun the Pin tool while specifying some of them as arguments.

$ ldd /bin/ls
    linux-vdso.so.1 (0x00007ffd0d19b000)
    libgtk3-nocsd.so.0 => /usr/lib/x86_64-linux-gnu/libgtk3-nocsd.so.0 (0x00007f32df3ad000)
    libselinux.so.1 => /lib/x86_64-linux-gnu/libselinux.so.1 (0x00007f32df185000)
    libc.so.6 => /lib/x86_64-linux-gnu/libc.so.6 (0x00007f32ded94000)
    libdl.so.2 => /lib/x86_64-linux-gnu/libdl.so.2 (0x00007f32deb90000)
    libpthread.so.0 => /lib/x86_64-linux-gnu/libpthread.so.0 (0x00007f32de971000)
    libpcre.so.3 => /lib/x86_64-linux-gnu/libpcre.so.3 (0x00007f32de6ff000)
    /lib64/ld-linux-x86-64.so.2 (0x00007f32df7d6000)

This concludes the tutorial. The resulting Pin tool can now be used as a starting point for developing a taint analysis engine. Discuss more with your lab assistant if you're interested.

Patch your way through all the tasks and run the pin tool only for the base object of any binutil.

Include a screenshot of the output.

05. [10p] Feedback

Please take a minute to fill in the feedback form for this lab.

05. [20p] Gnuplot graphs

Using the Gnuplot documentation, implement a script that plots four graphs. Use the data from data4.txt as follows: the first graph should plot columns 1 and 2, the second columns 1 and 3, the third one columns 1 and 4, and the fourth one should be a 3D graph plotting columns 1, 2 and 3.

- Use different colours for the data in each graph.

- Remove the keys for each graph.

- Give a title to each graph.

- Give names to each of the axes: X or Y (or Z for the 3D graph). In the case of the 3D graph: Make the numbers on the axes readable, and correct the position of the names of the axes if these are displayed over the axes numbers.

- Make the script generate the .eps file containing your plot.

06. [30p] Gnuplot bar graphs

Use Gnuplot and the data from autoData.txt to generate separate bar graphs for the following:

- The ”MidPrice” of all the ”small” cars.

- The average fuel consumption (MPG - miles per gallon) for all the ”large” cars.

- The ”MaxPrice” over the average fuel consumption for all ”chevrolet” and ”ford” cars.

The graphs should be as complete as possible (title, axes names, etc.)

07. [10p] Feedback

Please take a minute to fill in the feedback form for this lab.